Projects

DFG/SFB 1410 Project - C03 Learning Human-like Trajectories for Whole-Body Motions

Content

Digital embodiments, such as robots, will appear in many shared environments in the near future. In contrast to virtual avatars, humanoid robots have an impressive presence for the humans interacting with them, which leads people to interact with them more carefully than with virtual avatars. Human-like motions for humanoid robots increase their acceptance in shared workspaces and in society. For humanoid robots it is not sufficient to look like humans; people also want to see predictable motions that fit their expectations. This is an important step toward increasing their acceptance. Human-like motions can help avoid the so-called "Uncanny Valley", which describes the eerie feeling of seeing something like a living corpse. Thus, the goal of this research project is to develop new methods for achieving functional, collision-free and human-like motions for humanoid robots.

Project period

01.01.2020 - 31.12.2023, extended until 30.06.2024

Is funded by

DFG/SFB 1410 Project - C04 Highly Dexterous Telemanipulation with Haptic Feedback and Shared Autonomy

Content

Telemanipulation with robots will gain more attention in the future. Remote manipulation transmitted over large distances will enable dexterous manipulation in dangerous situations or in areas inaccessible to humans. Applications range from space robotics to medical telerobotics. Mine clearance and rescue operations after earthquakes also call for telemanipulated robots. Furthermore, concepts for telemanipulation can be adapted to control a virtual avatar with full-body motions. The main research questions are: how can we transmit and reproduce sensor information as accurately as possible, so that operators of telemanipulated systems feel as if they were physically present at the remote site, and how can we support teleoperated manipulation by blending shared autonomy with manual input? When we manipulate objects, haptic interaction plays a key role. Thus, the first purpose of this project is to create the feeling of real physical contact through a remotely operated machine. Our main aim is to improve the control behavior of systems with long-distance communication between a human operator and a teleoperated robot by combining telemanipulation with haptic feedback.

Project period

01.01.2020 - 31.12.2023

Is funded by

Completed Projects

I-RobEka: Interaction strategies for a robotic shopping assistant: methodological foundations of interaction and navigation

Content

This research project focuses on scenarios in which autonomous mobile robots serve as shopping aids for customers. Shopping for groceries in a supermarket is an everyday situation for people. When robots support humans there, human-robot interaction takes place in a complex and dynamic environment. Therefore, basic interaction skills will be developed to provide situation-dependent support. Communication between robots and humans is manifold; it will be established through gaze, speech and pointing gestures. In general, we will develop interaction strategies as the conceptual and technical basis for social, contextual, multi-channel interaction between humans and robots in future shared environments such as a supermarket. Thus, the guiding principle of the project is the development of an adaptive interaction concept for service robots.


Project period

01.04.2018 - 30.09.2021

Partner

LUNAR GmbH, Innok Robotics GmbH, Toposens GmbH, Technische Universität Chemnitz (Zentrum für Virtual Humans), Professur für Graphische Datenverarbeitung & Visualisierung, Professur Robotik und Mensch-Technik-Interaktion, Professur Prozessautomation, Professur für Medieninformatik

Is funded by

BMBF

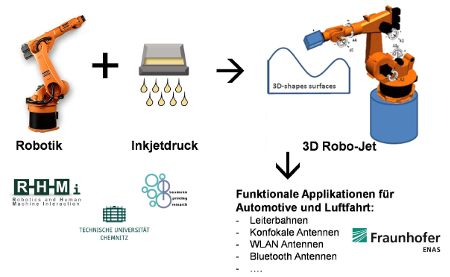

3D-ROBOJET: Inkjet printing on 3D surfaces

Content

The demand for innovative and individualized components and products is growing across industries. Digital manufacturing technologies such as inkjet printing are needed to manufacture individualized products cost-efficiently under competitive market conditions. Inkjet printing produces digital print images, which greatly reduces machine set-up times. This project addresses the need to use inkjet printing to individualize three-dimensional components and products. Its aim is to develop the technology required to apply additional functions, such as printed circuits or antennas, to three-dimensional objects with robot support.

Project period

01.03.2019 - 31.07.2021

Partner

Fraunhofer ENAS, ZFM

Is funded by

AugBot: Supporting human-robot interaction with augmented reality glasses

Content

Human-Robot Interaction (HRI) is an important element of digitalization and of the increasing flexibility of modern industrial production. The aim of the research project is to increase the usability and safety of HRI by using augmented reality (AR) glasses. With the help of AR glasses, a system will be developed that significantly simplifies working with HRI.

Two demonstrators will be realized in this project. In the first, the user of a hand-guided robot is shown singularities that, for example, need to be avoided or bypassed. In addition, paths suitable for reaching the goal are displayed. While the demonstrator is in teach mode, the user is also shown additional simulation and CAD data in order to gain a better overview of the programming task that lies ahead. In this way, the methods developed will make assembly robots easier to program. The results can be used directly by SMEs that program robots for assembly in small lot sizes.

Project period

01.01.2018 - 31.08.2020

Is funded by

BMWi / AiF

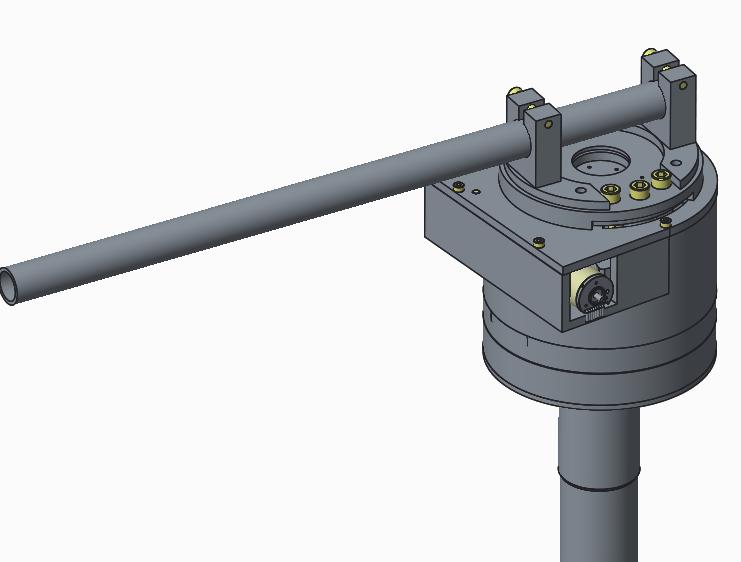

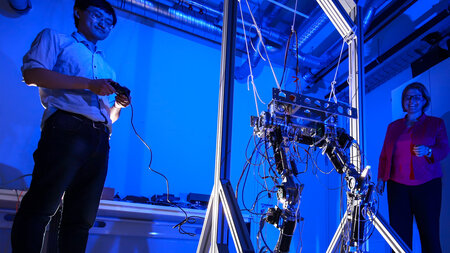

Design of a humanoid dual-arm robot with variably programmable, non-linear elastic joints

Content

This research project aims to develop a new, non-linear, elastic robot joint for humanoid robots. A prototype joint is to be developed and equipped with suitable controllers. Its stiffness can be programmed variably: some settings make the joint softer, while others make it stiffer. This increases the safety of human-robot interaction and also allows higher forces to be applied. The joint could also be used as a knee joint or in a dual-arm robot. A prototype of the joint will be developed and integrated into the humanoid walking robot TUCO.

Project period

01.09.2017 - 31.08.2020

Researcher

Hongxi Zhu

Is funded by

ESF-Promotionsförderung

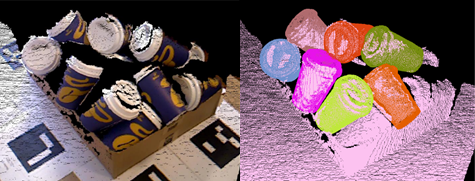

3D scene analysis and object recognition for active perception tasks in robotics

Content

The aim is to analyze three-dimensional scenes in order to recognize objects and estimate their 6D poses. With model-based scene analysis, we are able to estimate the positions of objects in cluttered scenes. Deep learning techniques are used to accelerate recognition and improve the recognition rate.

In particular, convolutional neural networks (CNNs) are used to increase the recognition rate. Interactive perception enables the robot to explore its environment by interacting with it. With the help of this interactive system, ambiguities are resolved and scenes in unstructured environments can be analyzed.

Project period

01.10.2016 - 30.09.2019

Researcher

Hannes Kisner

Is funded by

ESF-Promotionsförderung

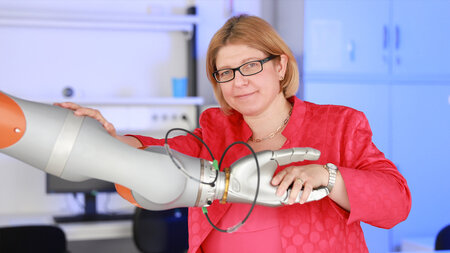

Tactile perception

Content

Within this research project, a resistive force sensor is developed and integrated into a five-finger robotic hand. The sensor has a spatial resolution of approx. 5 mm.

Project period

01.04.2018 - 31.08.2019

Partner

Wessling Robotics, Oberpfaffenhofen

Is funded by

Bayerische Forschungsstiftung

SMErobotics

Content

SMErobotics aims to simplify robot-supported assembly processes for small and medium-sized enterprises (SMEs) so that they, too, can benefit from ongoing automation. This allows industrial end users to increase their efficiency while retaining their ability to deliver customer-specific products in small lot sizes. A new generation of flexible robots and adaptable production machines can be integrated into manual production processes and workstations to work side by side with experienced workers.

Project period

This project started at the German Aerospace Center and was transferred to TU Chemnitz when Dr.-Ing. Ulrike Thomas took up her professorship here.

01.01.2012 - 30.06.2016

Partner

Fraunhofer IPA, Stuttgart, Comau, KUKA, Danish Technology Center, German Aerospace Center, Lund University, Güdel, Fortiss TUM

Was funded by

Seventh EU Framework Programme (FP7), grant number 287787