Research Seminar

The research seminar is aimed at interested students in the Master's or Bachelor's programs. Anyone else who is interested is always very welcome as well! Students and staff of the Professorship of Artificial Intelligence (KI) present current research-oriented topics. Talks are usually given in English. Please check the announcements on this page for the exact dates of individual events.

Information for Bachelor's and Master's Students

The seminar talks required by the curriculum (the "Hauptseminar" in the Bachelor-IF/AIF programs and the "Forschungsseminar" in the Master's program) can be completed as part of this seminar. Both courses (the Bachelor Hauptseminar and the Master Forschungsseminar) require participants to work out research-relevant knowledge independently and then present it in a talk. Candidates are expected to have sufficient background knowledge, which is usually acquired by attending the lectures Neurocomputing (formerly Maschinelles Lernen) or Neurokognition (I+II). Research topics typically come from artificial intelligence, neurocomputing, deep reinforcement learning, neurocognition, and neurorobotic and intelligent agents in virtual reality. Other topic suggestions are also very welcome! The seminar is arranged individually. Interested students are welcome to contact Prof. Hamker, without obligation, if they would like to complete one of the two seminar courses with us.

Upcoming Events

The Long Tail of Meaning: Compositionality in Machine Learning Models vs. Humans
Valentin Forch, Thu, 28. 5. 2026, 11:30, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Natural language and visual scenes are full of rare combinations: unusual object-attribute pairings, uncommon spatial relations, and novel arrangements of familiar parts. Human cognition handles these long tails efficiently. For example, we can understand a description like "A dog is riding on a pink horse," even if we have never encountered this situation before. This flexibility is often linked to grammar-like, generative symbolic representations: complex meanings can be built by combining simpler parts according to rules. Machine "cognition" in current vision-language models works very differently. These models perform impressively on frequent patterns learned from large datasets, but they are much more likely to fail when familiar elements appear in unfamiliar combinations. Such failures suggest limits in compositional generalization: the ability to recombine known concepts systematically in new situations. This seminar focuses on how compositional generalization is evaluated in vision and vision-language models, and how this relates to different cognitive skills and perception. Thus, I aim to shed light on how machine learning models could move beyond pattern matching toward more systematic, continual, and human-like recombination of knowledge.

Bayesian Without a Cause: How Unsupervised Learning Shapes Multisensory Space Representations
Valentin Forch, Thu, 4. 6. 2026, 11:30, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Multisensory spatial perception refers to how the brain combines signals from different senses, such as vision, touch, and proprioception, to make sense of the relative positions of our body and other objects in space. A classic example is the rubber hand illusion, in which seeing a fake hand being touched in synchrony with one's real, hidden hand can shift perceived hand location and even induce a sense of ownership over the fake hand. In this talk, I present a neurocomputational model of multisensory integration in a rubber-hand-illusion-like setting. The model uses population-coded visual, proprioceptive, and tactile inputs, and learns feedforward and recurrent connections through Hebbian and anti-Hebbian plasticity. I connect this to theories of population coding and show that, under this framework, receptive field width needs to scale with sensory reliability for optimal decoding. I also highlight a problem created by classical Oja normalization: while it stabilizes Hebbian learning, it also forces different sensory inputs to compete for a shared synaptic weight budget, which can impair neural coding of information. I show that applying normalization separately to modality-specific input groups preserves sensory information and improves integration. The final model reproduces Bayesian-causal-inference-like behavior and generates proprioceptive drift patterns under touch and no-touch conditions. More broadly, the work links local synaptic learning rules to probabilistic models of body ownership and multisensory perception.
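The shared-budget problem described in this abstract can be illustrated with a toy example. This is a minimal sketch, not the speaker's model: it assumes a single linear output neuron, plain Hebbian growth followed by explicit weight normalization (the unit-norm constraint that Oja's rule enforces approximately), and two hypothetical input groups standing in for modalities, one with much larger input variance. The helpers `hebbian_step` and `train` are illustrative names.

```python
import numpy as np


def hebbian_step(w, x, groups, lr=0.01, per_group=True):
    """One Hebbian update followed by explicit weight normalization.

    w, x   : 1-D arrays of synaptic weights and presynaptic inputs
    groups : list of index arrays, one per (hypothetical) input modality
    """
    y = float(w @ x)          # linear postsynaptic response
    w = w + lr * y * x        # plain Hebbian growth (returns a new array)
    if per_group:
        # Each modality keeps its own unit-length weight budget.
        for idx in groups:
            w[idx] /= np.linalg.norm(w[idx])
    else:
        # Classical, global normalization: all inputs share one budget.
        w /= np.linalg.norm(w)
    return w


def train(per_group, steps=2000, seed=0):
    rng = np.random.default_rng(seed)
    groups = [np.arange(0, 3), np.arange(3, 6)]  # two hypothetical modalities
    w = rng.normal(size=6)
    w /= np.linalg.norm(w)
    for _ in range(steps):
        # Modality A (indices 0-2) has 16x the input variance of modality B (3-5).
        x = np.concatenate([2.0 * rng.normal(size=3), 0.5 * rng.normal(size=3)])
        w = hebbian_step(w, x, groups, per_group=per_group)
    return w, groups


w_global, groups = train(per_group=False)
w_split, _ = train(per_group=True)
for idx in groups:
    print("global:", np.linalg.norm(w_global[idx]),
          "per-group:", np.linalg.norm(w_split[idx]))
```

With one global norm, the weights of the low-variance group shrink toward zero because both groups compete for the same budget; normalizing each group separately keeps both at unit length, so neither modality is silenced.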

A Study of the Performance of LLM Agents with MCP under Increasing Task Complexity, Using Automated Test Case Implementation as an Example
Victoria Nöther, Thu, 11. 6. 2026, 11:30, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
As large language models (LLMs) spread in professional settings, LLM agents are also gaining importance. Although LLMs show impressive performance, they still make mistakes, which can be particularly critical in customer projects. One trigger for such errors is increasing task complexity. What complexity means in a given case, however, can be defined in different ways and has so far been analyzed in little detail in practical applications. This thesis focuses on implementing test cases in automotive software testing with an LLM agent. First, domain experts rate the test cases by complexity. A purpose-built LLM agent then implements these test cases in the software testing tool ecu.test. The functions (tools) the agent needs are provided via the Model Context Protocol (MCP). The agent's task success rate is 75% for easy test cases and decreases as complexity increases. In addition, the agent's specific causes of failure are examined. The evaluation shows that errors occur very consistently at particular structures within the test cases. The method used to assign complexity levels proves suitable for intuitively estimating how complex a task is for an LLM agent in a practical application domain. The underlying factors of complexity turn out to be multifaceted and are discussed in this thesis.

Past Events

Finding a Topic for the Research Seminar
Fred Hamker, Thu, 7. 5. 2026, A10.368
Consultation on possible topics for interested students.

Introduction to Deep Reinforcement Learning and Successor Representations
Erik Syniawa, Thu, 30. 4. 2026, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
In this talk I introduce basic deep reinforcement learning (RL) concepts from the ground up, covering imitation learning, policy gradients, and actor-critic methods, before asking what these approaches get wrong. Standard model-free RL conflates two distinct problems: learning where a policy goes and how rewarding those places are. This coupling makes reward adaptation expensive and transfer across tasks structurally difficult. I motivate the Successor Representation (Dayan, 1993) as a principled decoupling of dynamics from reward, and show how Successor Features (Barreto et al., 2017) can scale this idea to large state spaces. I close with a brief outlook on latent self-predictive representations as a modern version of these principles. All concepts are developed intuitively from first principles, (hopefully) making them accessible to everyone. But a small disclaimer: there will be math and there is no elegant way to fully hide a Bellman equation ;)
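To make the decoupling of dynamics from reward concrete, here is a minimal tabular sketch (not material from the talk). For a fixed policy with state-transition matrix P and discount γ, the successor representation is M = Σ_t γ^t P^t = (I − γP)^(-1), and the value of any reward vector r is V = M r. The 4-state ring, both reward vectors, and the helper names are hypothetical; a TD(0) update that learns M from transitions alone is included for comparison.

```python
import numpy as np


def sr_closed_form(P, gamma):
    """Successor representation for a fixed policy: M = (I - gamma * P)^-1."""
    n = P.shape[0]
    return np.linalg.inv(np.eye(n) - gamma * P)


def sr_td_update(M, s, s_next, gamma, alpha):
    """One TD(0) step: move row M[s] toward onehot(s) + gamma * M[s_next]."""
    target = np.eye(M.shape[0])[s] + gamma * M[s_next]
    M[s] += alpha * (target - M[s])


# Hypothetical 4-state ring; the policy always moves from s to (s + 1) % 4.
P = np.roll(np.eye(4), 1, axis=1)
gamma = 0.9
M = sr_closed_form(P, gamma)

# The same M serves two different reward functions: only M @ r changes.
r_a = np.array([0.0, 0.0, 0.0, 1.0])
r_b = np.array([1.0, 0.0, 0.0, 0.0])
V_a, V_b = M @ r_a, M @ r_b

# Learning M from sampled transitions, without ever observing a reward.
M_td = np.zeros((4, 4))
for _ in range(2000):
    for s in range(4):
        sr_td_update(M_td, s, (s + 1) % 4, gamma, alpha=0.1)
```

When the reward changes, only the cheap product M @ r has to be recomputed, whereas a model-free value function would have to be relearned. Both value vectors still satisfy the Bellman equation V = r + γPV.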

Bridging the Latency Gap: A Neurocomputational Model of Predictive Remapping
Nikolai Stocks, Thu, 23. 4. 2026, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Visual perception remains remarkably stable across saccadic eye movements despite rapid and large displacements of the retinal image. This stability is thought to rely, in part, on predictive remapping processes that update spatial representations in anticipation of eye movements. However, the precise functional role and temporal characteristics of these mechanisms remain debated. In this study, we investigate predictive remapping within a neurocomputational model of spatial perception based on quasi-multiplicative gain fields. The model integrates retinal input with corollary discharge (CD) and proprioceptive eye-position (PC) signals to generate distributed spatial representations. We emulate a visual foraging paradigm and reproduce receptive field dynamics reported in electrophysiological recordings from lateral intraparietal area (LIP). Our results show that CD-driven predictive remapping is sufficient to account for the rapid transition between pre- and postsaccadic representations observed experimentally, particularly during the early postsaccadic interval. By systematically manipulating model components, we demonstrate that the latency period following saccade offset, during which bottom-up visual input is not yet available, imposes a fundamental computational constraint that necessitates predictive mechanisms. In contrast, presaccadic activity patterns are not well explained by CD-based remapping and are more consistent with attentional or top-down influences. Together, these findings suggest a functional dissociation between presaccadic modulation and postsaccadic continuity. Predictive remapping, driven by corollary discharge, appears to primarily serve to bridge the latency-induced gap in visual processing, ensuring a seamless transition between perceptual states across saccades.

Reframing Continual Motor Learning in Robotics: Hypernetwork-Driven Control of Spiking Reservoir Dynamics
Johannes Hofele, Thu, 16. 4. 2026, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
The increasing use of robotic systems in dynamic environments requires control strategies that are not only precise but also adaptive and capable of continual learning. Conventional control methods and purely data-driven approaches often struggle to generalize to new tasks and are prone to catastrophic forgetting when trained sequentially. Inspired by biological learning mechanisms, this thesis investigates a control framework that combines context-dependent hypernetworks with spiking reservoirs for real-time motor control. The approach uses a hypernetwork to generate the recurrent weights of a spiking reservoir, enabling task-dependent modulation of the network dynamics. The architecture is evaluated on a two-joint robot arm performing several trajectory reproduction tasks, including linear, circular, and figure-eight movements. The results show that the model can reliably learn and reproduce simple trajectories with high accuracy, while more complex movements remain challenging due to the limited expressiveness of the reservoir. In addition, the system shows a partial ability to mitigate catastrophic forgetting by retaining key features of previously learned tasks under continual learning conditions. Overall, this work highlights the potential of hypernetwork-controlled spiking systems for adaptive motor control and continual learning, while transparently identifying current limitations in expressiveness and scalability.

Information Session for Students for the Summer Semester
Fred Hamker, Thu, 9. 4. 2026, A10.368

Camera Simulation Credibility Assessment for Automotive Perception Approaches
Arya Anup, Tue, 31. 3. 2026, https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Synthetic data is widely used for testing and validation in autonomous driving. This thesis investigates different methods for assessing the credibility of synthetic data for real-world perception tasks in autonomous driving. A structured multi-level evaluation framework is applied to object detection, multi-object tracking, and semantic segmentation using the dataset pairs KITTI-VKITTI 2 and Cityscapes-Synscapes. Leave-One-Out (LOO) training splits are used to analyze robustness across varying scene compositions. Input-level similarity between real and synthetic training splits is assessed with the Fréchet Inception Distance (FID) and the Kernel Inception Distance (KID). Task-level performance differences are assessed using bootstrap-based statistical equivalence testing. Distribution-level and scene-level deviations are further analyzed to characterize the magnitude and structure of performance shifts. Feature-level representation similarity between models trained on real and synthetic data is assessed using Centered Kernel Alignment (CKA) metrics. For the selected dataset pairs, the results indicate measurable feature distribution differences between real and synthetic training data that exceed intra-domain baselines. At the task level, aggregate performance metrics such as mAP@0.5, MOTA, and mIoU frequently fail statistical equivalence under stricter tolerance margins, whereas dominant object categories remain comparatively stable in some settings. Performance deviations are consistent across splits, and representation analysis reveals strong alignment in early network layers with increasing divergence in higher, task-specific layers. However, no consistent split-wise relationship is observed between input-level similarity, representation similarity, and task-level performance differences.
The findings demonstrate that credibility assessment requires integrating multiple complementary evaluation levels, as no single metric fully explains sim-to-real performance behavior.
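For readers unfamiliar with CKA, the following sketch implements linear CKA as defined by Kornblith et al. (2019) for two activation matrices recorded on the same inputs. It is an illustration only: the thesis may use a different kernel or estimator, and the random activation matrices below are hypothetical stand-ins for layer outputs of a "real" and a "synthetic" model.

```python
import numpy as np


def linear_cka(X, Y):
    """Linear CKA between activations X (n x p1) and Y (n x p2) for the same n inputs."""
    X = X - X.mean(axis=0, keepdims=True)  # center every feature column
    Y = Y - Y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(Y.T @ X, "fro") ** 2
    return cross / (np.linalg.norm(X.T @ X, "fro") * np.linalg.norm(Y.T @ Y, "fro"))


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 32))                   # hypothetical layer activations
Q, _ = np.linalg.qr(rng.normal(size=(32, 32)))   # random orthogonal matrix
Y_rotated = 3.0 * X @ Q                          # same representation, rotated and rescaled
Z = rng.normal(size=(200, 16))                   # unrelated representation

print(linear_cka(X, X), linear_cka(X, Y_rotated), linear_cka(X, Z))
```

Linear CKA equals 1 for identical representations, stays 1 under orthogonal transformations and isotropic scaling, and is much lower for unrelated features. This invariance is what makes it usable for comparing corresponding layers of two separately trained networks, whose individual neurons need not line up.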

Seeing Despite Eye Movements: Simulating Trans-saccadic Orientation Integration in a Neurocomputational Model
Jonas Stefaniak, Wed, 18. 3. 2026, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
The human visual system produces a stable perception of the environment despite constant eye movements. A central mechanism for this is trans-saccadic integration: the ability to maintain feature information across saccades and merge it with postsaccadic information into a coherent representation. This thesis implements the experiment of Stewart and Schütz (2019) on trans-saccadic orientation discrimination in an existing neurocomputational model of the parietal cortex (TU Chemnitz, Professorship of Artificial Intelligence). The model combines a dorsal pathway for spatial coordinate transformation via gain fields with a ventral pathway for feature processing, and was extended with orientation-selective decision populations (V4_L, V4_R). The simulation results qualitatively reproduce two central patterns of the original experiment: a trans-saccadic advantage over single pre- and postsaccadic presentations, and a dependence of integration on the reference frame (spatiotopic > retinotopic). The underlying mechanism lies in the coordinate transformation via LIP and Xh: pre- and postsaccadic information converges only when the stimulus remains spatiotopically constant. Because the corollary discharge pathway requires presaccadic input to build up activity at the target position via LIP, a placeholder was implemented for the postsaccadic condition in the form of an additional population that maintains a constant attentional focus in LIP, independent of the presaccadic stimulus.

Exploring reinforcement learning strategies in automated wheel truing
Toni Rozsahegyi, Wed, 18. 3. 2026, A10.368 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Spoked bicycle wheel truing, correcting rim deviations by adjusting spoke tensions, presents a challenging testbed for reinforcement learning due to its high-dimensional state space, non-local action effects, and multi-objective constraints. The central question is whether RL agents can learn effective truing policies in simulation, comparing value-based (Rainbow DQN), policy-gradient (PPO), and model-based (TD-MPC2) approaches across different state representations, action spaces, and reward functions. A systematic ablation study reveals substantial performance differences across these design choices, with some results challenging conventional intuitions about state representation and action space formulation. The insights from these experiments inform the selection and optimization of a candidate policy for future sim-to-real transfer to physical truing machines.

OBM Pinpointing with Machine Learning
Nandini Mehta, Mon, 16. 3. 2026, https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Euro 7 introduces stricter real-driving emission requirements and shifts diagnostics from component-level fault detection to system-level emission monitoring. Although On-Board Monitoring (OBM) can detect excessive emissions, it often cannot determine which subsystem is responsible, particularly when faults are soft, combined, or distributed. This thesis addresses that challenge by proposing a compact data-driven method for subsystem-level fault pinpointing. Using a large multi-vehicle dataset of quasi-stationary diesel powertrain snapshots, the study applies feature selection and correlation analysis to reduce the input space. A baseline autoencoder is first used for anomaly detection, followed by a supervised contrastive autoencoder that learns a structured latent space where similar fault families cluster together and different faults are clearly separated. A lightweight classifier is then trained on these embeddings to identify emission-relevant subsystems. The proposed method achieves 94.16% accuracy on more than 370,000 validation samples, demonstrating a practical and interpretable solution for Euro 7 OBM-OBD diagnostic integration.

Development of Deep Learning Methods for Estimating Facial Landmarks in an In-Cabin Sensing Ground Truth System
Gaurav Rana, Tue, 10. 3. 2026, https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
This thesis investigates deep learning methods for detecting facial landmarks in near-infrared (NIR) imagery as part of an in-cabin sensing ground truth system for driver monitoring. Reliable landmark localisation is essential for downstream safety-critical functions such as blink detection, yawn detection, and head pose estimation. However, models trained primarily on RGB data often struggle with the illumination variations and domain differences present in NIR environments. To address this challenge, a two-stage hybrid deep learning architecture was developed. The first stage adapts the Infrared face detector for face localisation, while the second stage employs a stacked hourglass network to estimate facial landmarks through heatmap regression. The model was evaluated in two output settings: the full 68-landmark scheme and a reduced set of 30 critical landmarks designed for driver monitoring applications. A dedicated NIR dataset with subject-level splits was created, and the models were optimized using targeted training strategies and NIR-specific data augmentation. Experimental results show that the proposed approach significantly improves landmark localisation accuracy compared to pre-trained baselines while also reducing failure rates. In particular, the reduced landmark configuration provides highly accurate and stable landmark estimates suitable for real-time cabin sensing applications.
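Heatmap regression, as used in the second stage above, trains the network to output one heatmap per landmark instead of raw coordinates. The sketch below shows the two ends of such a pipeline under stated assumptions: Gaussian target heatmaps and plain per-channel argmax decoding (the thesis may use different targets or sub-pixel refinement). The 64 x 64 grid, the three landmark positions, and the helper names are hypothetical, and no network is involved.

```python
import numpy as np


def gaussian_heatmap(h, w, cx, cy, sigma=2.0):
    """Training target for one landmark: a 2-D Gaussian peaked at pixel (cx, cy)."""
    ys, xs = np.mgrid[0:h, 0:w]
    return np.exp(-((xs - cx) ** 2 + (ys - cy) ** 2) / (2.0 * sigma ** 2))


def decode_landmarks(heatmaps):
    """Recover integer (x, y) per landmark from a (K, H, W) stack via argmax."""
    k, h, w = heatmaps.shape
    flat_idx = heatmaps.reshape(k, -1).argmax(axis=1)
    ys, xs = np.unravel_index(flat_idx, (h, w))
    return np.stack([xs, ys], axis=1)


# Hypothetical example: 3 landmarks on a 64 x 64 output grid.
landmarks = [(10, 20), (40, 5), (63, 63)]
heatmaps = np.stack([gaussian_heatmap(64, 64, x, y) for x, y in landmarks])

# Small additive noise stands in for an imperfect network prediction.
noisy = heatmaps + 0.01 * np.random.default_rng(0).normal(size=heatmaps.shape)
print(decode_landmarks(noisy))
```

Predicting a smooth map per landmark rather than two numbers lets a fully convolutional network such as a stacked hourglass keep spatial structure, and decoding remains a cheap per-channel argmax.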

Biologically Inspired Active Vision
Jan Chevalier, Tue, 17. 2. 2026, A10.367 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Active vision in robots can be addressed with biologically inspired approaches. Two different approaches that let a robot find its bearings on its own are presented. The first is a model that builds its own internal model of the environment ("A Hierarchical System for a Distributed Representation of the Peripersonal Space of a Humanoid Robot", M. Antonelli, 2014). The second is a model that was tested on three different robot heads ("A Portable Bio-Inspired Architecture for Efficient Robotic Vergence Control", A. Gibaldi, 2017). In addition, an approach to biologically inspired object recognition is presented ("Active Vision: on the relevance of a Bio-inspired approach for object detection", K. Hoang, 2019).

Part 2: Why did I drive to work on my day off? Perspectives on goal-directed and habitual behavior with implications for future AI systems
Erik Syniawa, Tue, 3. 2. 2026, A10.208.1 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Although modern AI systems excel at complex tasks such as language generation, they lack the adaptive efficiency that characterizes human behavior. Humans seamlessly balance deliberate planning with automatic responses depending on context and computational demands. In this talk we will discuss recent frameworks suggesting that goal-directed and habitual control are integrated rather than separate systems. Butz et al. (2025) propose a computational account where context inference optimizes cognitive effort, while Hamker et al. (2025) propose an anatomical perspective through interacting cortico-basal ganglia loops and learned shortcuts. I will outline the potential synergies and incompatibilities that could arise in integrating these frameworks. Finally, I will discuss how principles from these frameworks, particularly context integration and shortcuts, could inspire more efficient AI architectures and how these principles relate to already published AI approaches.

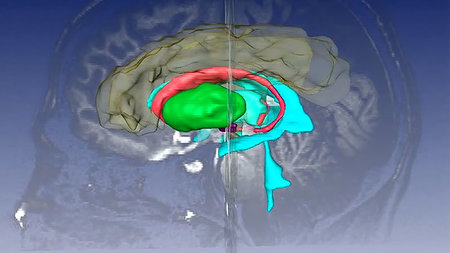

Interacting cortico-basal ganglia-thalamocortical loops in behavioral control
Fred Hamker, Tue, 27. 1. 2026, A10.208.1 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
I will present a new framework explaining how the brain controls goal-directed behavior, habits, and their learning processes. I propose that goal selection is implemented through the limbic loop, which connects the hippocampus to the ventral basal ganglia. This loop retrieves goal plans from memory, allowing the brain to 'virtualize' potential outcomes based on prior experiences. Once a goal is selected, plan execution is carried out by other, hierarchically organized cortico-basal ganglia circuits, with lower-level loops being guided by objectives set by higher-level ones. I will also present findings from a neuro-computational model applied to a well-known two-stage decision-making task. Our results highlight the central role of hippocampal replay in learning and behavior, particularly in distinguishing between model-based and model-free learning strategies.

Explaining the Interaction Between Criticality and Evolutionary Success in Recurrent Neural Network Models for Dynamical Control
Idris Hafsa, Tue, 20. 1. 2026, A10.208.1 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Neural networks operating in real-world environments are often exposed to noisy and unpredictable inputs, which can lead to unstable behavior. This work investigates how the dynamical regime of recurrent neural networks influences their robustness under such perturbations, with a focus on the concept of criticality. Recurrent networks were trained in a controlled environment and subjected to external disturbances to evaluate their stability and performance. The results indicate that classical indicators of criticality do not provide a reliable predictor of robustness or performance. Instead, performance is strongly shaped by emergent behavioral strategies and task-specific dynamics, highlighting the need for alternative approaches to studying stability and robustness in recurrent neural systems.

Why did I drive to work on my day off? Perspectives on goal-directed and habitual behavior with implications for future AI systems
Erik Syniawa, Tue, 16. 12. 2025, A10.208.1 and https://webroom.hrz.tu-chemnitz.de/gl/mic-cv7-ptw
Although modern AI systems excel at complex tasks such as language generation, they lack the adaptive efficiency that characterizes human behavior. Humans seamlessly balance deliberate planning with automatic responses depending on context and computational demands. In this talk we will discuss recent frameworks suggesting that goal-directed and habitual control are integrated rather than separate systems. Butz et al. (2025) propose a computational account where context inference optimizes cognitive effort, while Hamker et al. (2025) propose an anatomical perspective through interacting cortico-basal ganglia loops and learned shortcuts. I will outline the potential synergies and incompatibilities that could arise in integrating these frameworks. Finally, I will discuss how principles from these frameworks, particularly context integration and shortcuts, could inspire more efficient AI architectures and how these principles relate to already published AI approaches.