Neurokognition I

Winter Semester 2025

Information

Start of exercises: October 20-23

- Exercise registration via OPAL (for students without a FRIZ account)

There is no mandatory day or group for students of particular degree programmes; exercise groups may be chosen freely.

Additional lecture videos can be found here and here.

Exam Information

Dates: February 16, 19, 26; March 23, 30, 31

Location: Room A11.216 (old 1/B216)

Appointment booking: link

Instructions:

- Book an appointment: See link above. Select a single appointment by clicking "Buchen" (Book).

- "Name" field: Enter "Name, student ID number, n1" or, if you want to take the exam in Neurocognition 2, enter "Name, student ID number, n2".

- "E-Mail" field: Enter your email address.

- After booking: Send an email to susan.koehler@informatik.tu-chemnitz.de from the same email address, stating your name, student ID number, your chosen appointment, and which exam (Neurocognition 1 or 2) you will take.

Selecting an appointment in the booking list does not replace the registration of your exam at the Central Examination Office (Zentrales Prüfungsamt)!

Contents

The course introduces the modelling of neurocognitive processes in the brain. Neurocognition is a research field located at the intersection of psychology, neuroscience, computer science and physics. It serves to understand the brain on the one hand and to develop intelligent adaptive systems on the other.

Neurokognition I mainly presents realistic neuron models and network properties, as well as learning. In the exercises, the algorithms from the lecture are deepened through implementations in MATLAB. Knowledge of MATLAB is not a prerequisite for participation; if needed, it can be acquired before the exercises begin. For this purpose, a script for self-study and a practical exercise session are offered in the first weeks of the lecture period.

Neurokognition II examines more complex models of neuropsychological processes, with the aim of developing new algorithms for intelligent, cognitive robots. Topics include perception, memory, action control, emotions, decision making and spatial perception. For a deeper understanding, the exercises also include practical tasks on the computer.

Course Details

Recommended prerequisites: Basic knowledge of Mathematics I to IV

Examination: Oral examination

Objectives: Subject-specific knowledge of neurocognition

Syllabus

Introduction

The introduction motivates the goals of the course and basic concepts of models. It further explains why computational models are useful to understand the brain and why cognitive computational models can lead to a new approach in modeling truly intelligent agents.

The styles of computation used by biological systems are fundamentally different from those used by conventional computers: biological neural networks process information using energy-efficient, asynchronous, event-driven methods. They learn from their interactions with the environment, and can flexibly produce complex behaviors. These biological abilities yield a potentially attractive alternative to conventional computing strategies.

Neurokognition I provides an introduction to computational modelling at the neural level. Starting with basic properties of electrical circuits, different formal neuron models are introduced. The second focus is on learning and plasticity, where different learning rules are introduced and discussed. Finally, different network mechanisms are introduced, laying the groundwork for complex large-scale models of the brain.

Part I Model neurons

Part I describes the basic computational elements of the brain: neurons. Even a single neuron is an extremely complex device, so models at different levels of abstraction are introduced.

Biophysical models of single cells are constructed using electrical circuits composed of resistors, capacitors, and voltage and current sources. This lecture introduces these basic elements.
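As a minimal illustration of these circuit elements, assume a single membrane patch modelled as a capacitor C in parallel with a leak resistor R and a battery at the resting potential E_L, driven by a current source I. Kirchhoff's current law then gives C dV/dt = -(V - E_L)/R + I. The Python sketch below (parameter values and units are illustrative assumptions, not course values) integrates this equation with forward Euler and compares it with the analytic charging curve.

```python
import math


def rc_membrane_euler(i_ext, r_m=10.0, c_m=1.0, e_l=-70.0, t_max=100.0, dt=0.01):
    """Integrate C dV/dt = -(V - E_L)/R + I with forward Euler; return V(t_max).

    Assumed units: R in MOhm, C in nF, I in nA, V in mV, t in ms,
    so that tau = R*C is in ms (10 MOhm * 1 nF = 10 ms).
    """
    v = e_l
    for _ in range(int(round(t_max / dt))):
        dv_dt = (-(v - e_l) / r_m + i_ext) / c_m  # leak current plus injected current
        v += dt * dv_dt
    return v


def rc_membrane_exact(i_ext, t, r_m=10.0, c_m=1.0, e_l=-70.0):
    """Analytic solution for a current step switched on at t = 0."""
    tau = r_m * c_m
    return e_l + r_m * i_ext * (1.0 - math.exp(-t / tau))


if __name__ == "__main__":
    # A 1 nA step charges the membrane towards E_L + R*I = -60 mV with tau = 10 ms.
    print(rc_membrane_euler(1.0, t_max=10.0), rc_membrane_exact(1.0, 10.0))
```

With dt much smaller than tau, the Euler result stays within a few thousandths of a millivolt of the analytic curve.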

Integrate-and-fire models describe the membrane potential with an ODE but do not explicitly model the generation of an action potential. Instead, they emit an action potential, and reset the membrane potential, whenever the membrane potential reaches a particular threshold.
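A minimal sketch of a leaky integrate-and-fire neuron, assuming tau_m dV/dt = -(V - E_L) + R_m I, a fixed threshold V_th, an instantaneous reset to V_reset and no refractory period (all parameter values are illustrative assumptions):

```python
def simulate_lif(i_ext, t_max=200.0, dt=0.1, tau_m=10.0, r_m=10.0,
                 e_l=-70.0, v_th=-50.0, v_reset=-70.0):
    """Leaky integrate-and-fire neuron integrated with forward Euler.

    Units: ms, mV, MOhm, nA. Returns the list of spike times in ms.
    The spike itself is not modelled; crossing v_th is simply recorded.
    """
    v = e_l
    spikes = []
    for step in range(int(round(t_max / dt))):
        v += dt / tau_m * (-(v - e_l) + r_m * i_ext)
        if v >= v_th:                  # threshold reached: emit a spike ...
            spikes.append((step + 1) * dt)
            v = v_reset                # ... and reset the membrane potential
    return spikes


if __name__ == "__main__":
    print(len(simulate_lif(1.5)))      # R*I = 15 mV stays below threshold: no spikes
    print(simulate_lif(3.0)[:3])       # R*I = 30 mV: regular firing
```

For a constant supra-threshold current the analytic inter-spike interval is tau_m ln(R_m I / (R_m I - (V_th - E_L))), here 10 ln 3, about 11 ms, which the simulation reproduces up to the time step.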

The membrane potential controls a vast number of nonlinear gates and can vary rapidly over large distances. The equilibrium potential results from the balance between electrostatic forces and diffusion driven by thermal energy, and can be described by the Nernst and Goldman-Hodgkin-Katz equations.
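Both equations are easy to evaluate numerically. The sketch below computes the Nernst potential E = (RT / zF) ln(c_out / c_in) for one ion and the Goldman-Hodgkin-Katz voltage for K+, Na+ and Cl-, where the anion enters with inside and outside concentrations swapped. The concentrations (mM) and relative permeabilities are illustrative textbook-style assumptions.

```python
import math

R = 8.314462618   # gas constant, J / (mol K)
F = 96485.33212   # Faraday constant, C / mol


def nernst(c_out, c_in, z, temp_k=310.15):
    """Equilibrium potential of a single ion species in mV."""
    return 1000.0 * R * temp_k / (z * F) * math.log(c_out / c_in)


def ghk_voltage(p, c_out, c_in, temp_k=310.15):
    """GHK voltage equation in mV for K+, Na+ (cations) and Cl- (anion).

    p, c_out and c_in are dicts keyed by "K", "Na" and "Cl".
    For the anion the roles of inside and outside are swapped.
    """
    num = p["K"] * c_out["K"] + p["Na"] * c_out["Na"] + p["Cl"] * c_in["Cl"]
    den = p["K"] * c_in["K"] + p["Na"] * c_in["Na"] + p["Cl"] * c_out["Cl"]
    return 1000.0 * R * temp_k / F * math.log(num / den)


# Illustrative mammalian concentrations (mM) and relative permeabilities (assumptions).
C_OUT = {"K": 5.0, "Na": 145.0, "Cl": 110.0}
C_IN = {"K": 140.0, "Na": 15.0, "Cl": 10.0}
P_REL = {"K": 1.0, "Na": 0.05, "Cl": 0.45}

if __name__ == "__main__":
    print("E_K  =", nernst(C_OUT["K"], C_IN["K"], z=1))
    print("E_Na =", nernst(C_OUT["Na"], C_IN["Na"], z=1))
    print("V_GHK =", ghk_voltage(P_REL, C_OUT, C_IN))
```

With these numbers E_K is close to -89 mV and E_Na close to +61 mV, while the GHK resting potential lies in between, near -65 mV, because the membrane is far more permeable to K+ than to Na+.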

The Hodgkin-Huxley (H-H) theory of the action potential, formulated in 1952, ranks among the most significant conceptual breakthroughs in neuroscience (see Häusser, 2000). We provide a full explanation of this model and its properties.
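The following sketch integrates the Hodgkin-Huxley equations with forward Euler. It assumes the commonly used textbook parameterization of the squid-axon model with the resting potential shifted to about -65 mV (these values are not taken from the course material); units are mV, ms, mS/cm^2 and uA/cm^2.

```python
import math

C_M = 1.0                              # membrane capacitance, uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3      # maximal conductances, mS/cm^2
E_NA, E_K, E_L = 50.0, -77.0, -54.387  # reversal potentials, mV


def _vtrap(x):
    """x / (1 - exp(-x)), using the limit value 1 at x = 0 to avoid 0/0."""
    if abs(x) < 1e-7:
        return 1.0
    return x / (1.0 - math.exp(-x))


# Opening (alpha) and closing (beta) rates of the gates in 1/ms, V in mV.
def alpha_n(v): return 0.1 * _vtrap((v + 55.0) / 10.0)
def beta_n(v): return 0.125 * math.exp(-(v + 65.0) / 80.0)
def alpha_m(v): return _vtrap((v + 40.0) / 10.0)
def beta_m(v): return 4.0 * math.exp(-(v + 65.0) / 18.0)
def alpha_h(v): return 0.07 * math.exp(-(v + 65.0) / 20.0)
def beta_h(v): return 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0))


def simulate_hh(i_ext, t_max=100.0, dt=0.01):
    """Forward-Euler integration; i_ext in uA/cm^2. Returns spike times in ms."""
    v = -65.0
    # Start each gating variable at its steady state x_inf = alpha / (alpha + beta).
    n = alpha_n(v) / (alpha_n(v) + beta_n(v))
    m = alpha_m(v) / (alpha_m(v) + beta_m(v))
    h = alpha_h(v) / (alpha_h(v) + beta_h(v))
    spikes, above = [], False
    for step in range(int(round(t_max / dt))):
        i_na = G_NA * m**3 * h * (v - E_NA)   # sodium current
        i_k = G_K * n**4 * (v - E_K)          # potassium current
        i_l = G_L * (v - E_L)                 # leak current
        dv = (i_ext - i_na - i_k - i_l) / C_M
        dn = alpha_n(v) * (1.0 - n) - beta_n(v) * n
        dm = alpha_m(v) * (1.0 - m) - beta_m(v) * m
        dh = alpha_h(v) * (1.0 - h) - beta_h(v) * h
        v += dt * dv
        n += dt * dn
        m += dt * dm
        h += dt * dh
        # Record a spike at each upward crossing of 0 mV.
        if v > 0.0 and not above:
            spikes.append((step + 1) * dt)
        above = v > 0.0
    return spikes


if __name__ == "__main__":
    print(simulate_hh(10.0))  # repetitive firing for a sustained 10 uA/cm^2 input
```

With these parameters the leak reversal potential is chosen so that the membrane currents balance at -65 mV, so without input the model stays at rest.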

The Hodgkin-Huxley model captures important principles of neural action potentials. However, several important discoveries have been made since then. This lecture presents a few important ones, including more detailed models.

The synapse is remarkably complex and involves many simultaneous processes. This lecture explains how to model different (AMPA, NMDA, GABA) synapses and include them in the integrate-and-fire model.
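One common way to add synapses to an integrate-and-fire membrane is a conductance that jumps at each presynaptic spike and then decays exponentially, producing the current g(t) B(V) (V - E_syn). For NMDA receptors, B(V) describes the voltage-dependent magnesium block; the sketch uses the widely used Jahr-Stevens form. Time constants, reversal potentials and weights are illustrative assumptions, and the membrane is kept below threshold to show single postsynaptic potentials.

```python
import math

# Illustrative synapse parameters (assumptions): decay time constant (ms), reversal (mV).
SYN = {
    "AMPA": {"tau": 5.0, "e_rev": 0.0},
    "NMDA": {"tau": 100.0, "e_rev": 0.0},
    "GABA": {"tau": 10.0, "e_rev": -80.0},
}


def mg_block(v, mg=1.0):
    """Fraction of unblocked NMDA channels at membrane potential v (mV), [Mg2+] in mM."""
    return 1.0 / (1.0 + mg / 3.57 * math.exp(-0.062 * v))


def psp(kind, weight=0.01, t_max=100.0, dt=0.05, t_spike=10.0,
        tau_m=10.0, r_m=10.0, e_l=-70.0):
    """Membrane trace (mV) of a passive membrane after one presynaptic spike.

    weight is the conductance jump in uS; r_m in MOhm, so r_m * g * (V - E) is in mV.
    """
    params = SYN[kind]
    v, g = e_l, 0.0
    trace = []
    spike_step = int(round(t_spike / dt))
    for step in range(int(round(t_max / dt))):
        if step == spike_step:
            g += weight                                  # presynaptic spike arrives
        block = mg_block(v) if kind == "NMDA" else 1.0
        i_syn = g * block * (v - params["e_rev"])        # synaptic current in nA
        v += dt / tau_m * (-(v - e_l) - r_m * i_syn)
        g -= dt * g / params["tau"]                      # exponential decay of g
        trace.append(v)
    return trace


if __name__ == "__main__":
    for kind in SYN:
        trace = psp(kind)
        print(kind, max(trace), min(trace))
```

At rest the magnesium block removes most of the NMDA current, so the NMDA potential is much smaller than the AMPA one, while the GABA synapse hyperpolarizes the membrane because its reversal potential lies below rest.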

What, then, is a good model for large-scale simulations of thousands or millions of interconnected neurons? Integrate-and-fire neurons do not capture the firing patterns of real neurons sufficiently well, whereas Hodgkin-Huxley-type neurons are computationally very demanding. Izhikevich (2003) developed a model with only a few parameters that reproduces the firing patterns of real neurons sufficiently well. Building on this model and others, Brette and Gerstner (2005) developed an adaptive exponential integrate-and-fire model and compared it with a Hodgkin-Huxley-type model. The Izhikevich and the adaptive exponential integrate-and-fire models are good candidates for large-scale models with realistic neural dynamics and firing patterns. See also the review by Izhikevich (2010).

Additional Reading: Izhikevich, E.M. (2004) Which Model to Use for Cortical Spiking Neurons? IEEE Transactions on Neural Networks, 15:1063-1070.
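The Izhikevich model consists of two equations, dv/dt = 0.04 v^2 + 5 v + 140 - u + I and du/dt = a (b v - u), plus the reset v <- c, u <- u + d whenever v reaches 30 mV. The sketch below uses forward Euler with a 0.1 ms step; the parameter sets for the four firing patterns are the values commonly quoted from Izhikevich (2003) and should be checked against the paper.

```python
# (a, b, c, d) for a few firing patterns, as commonly quoted from Izhikevich (2003).
PATTERNS = {
    "regular_spiking": (0.02, 0.2, -65.0, 8.0),
    "intrinsically_bursting": (0.02, 0.2, -55.0, 4.0),
    "chattering": (0.02, 0.2, -50.0, 2.0),
    "fast_spiking": (0.1, 0.2, -65.0, 2.0),
}


def simulate_izhikevich(i_ext, pattern="regular_spiking", t_max=500.0, dt=0.1):
    """Return spike times (ms) of an Izhikevich neuron driven by a constant input."""
    a, b, c, d = PATTERNS[pattern]
    v = -65.0        # membrane potential (mV)
    u = b * v        # recovery variable
    spikes = []
    for step in range(int(round(t_max / dt))):
        v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + i_ext)
        u += dt * a * (b * v - u)
        if v >= 30.0:              # spike peak reached: reset
            spikes.append((step + 1) * dt)
            v = c
            u += d
    return spikes


if __name__ == "__main__":
    for name in PATTERNS:
        print(name, len(simulate_izhikevich(10.0, name)))
```

Only the four parameters change between patterns, which is why the model is cheap enough for large networks while still reproducing qualitatively different firing behaviour.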

As explained in previous lectures, information is transferred from one neuron to other neurons by action potentials (spikes). However, for several phenomena it is sufficient to model only the firing rate of neurons rather than the individual spikes. This lecture introduces basic rate-coded models.
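A minimal rate neuron, assuming the common form tau dr/dt = -r + F(I) with a sigmoidal transfer function F (the sigmoid and its parameters are illustrative choices), relaxes exponentially to the steady-state rate F(I) instead of producing individual spikes:

```python
import math


def transfer(i, r_max=100.0, theta=5.0, slope=1.0):
    """Sigmoidal input-to-rate function (assumed form): maximal rate r_max in Hz."""
    return r_max / (1.0 + math.exp(-(i - theta) / slope))


def simulate_rate(i_ext, t_max=200.0, dt=0.1, tau=10.0):
    """Forward-Euler integration of tau dr/dt = -r + F(I), starting at r = 0."""
    r = 0.0
    trace = []
    for _ in range(int(round(t_max / dt))):
        r += dt / tau * (-r + transfer(i_ext))
        trace.append(r)
    return trace


if __name__ == "__main__":
    trace = simulate_rate(5.0)
    print(trace[99], trace[-1])  # about 63% of F(I) after one tau, then F(I) = 50 Hz
```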

As outlined above, single neurons as well as coupled neurons can be described well as dynamical systems. Thus, all concepts of dynamical systems theory can be applied to neural models. Here I give a brief introduction to concepts of stability analysis and explain terms such as bifurcation, limit cycle and phase-plane method.
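Linear stability analysis can be illustrated on the FitzHugh-Nagumo model, a two-dimensional reduction of Hodgkin-Huxley dynamics chosen here as an example (it is not named in the course text): find the fixed point, linearize, and inspect the eigenvalues of the Jacobian. The classic parameter values a = 0.7, b = 0.8, eps = 0.08 are assumptions of this sketch.

```python
import numpy as np


def fhn_fixed_point(i_ext, a=0.7, b=0.8):
    """Fixed point of the FitzHugh-Nagumo model
        dv/dt = v - v**3 / 3 - w + I
        dw/dt = eps * (v + a - b * w)
    Inserting the w-nullcline w = (v + a) / b into dv/dt = 0 gives a cubic in v.
    For 0 < b < 1 the cubic is strictly decreasing, so it has exactly one real root.
    """
    coeffs = [-1.0 / 3.0, 0.0, 1.0 - 1.0 / b, i_ext - a / b]
    roots = np.roots(coeffs)
    v = float(roots[np.argmin(np.abs(roots.imag))].real)
    return v, (v + a) / b


def fhn_eigenvalues(i_ext, a=0.7, b=0.8, eps=0.08):
    """Eigenvalues of the Jacobian evaluated at the fixed point."""
    v, _ = fhn_fixed_point(i_ext, a, b)
    jacobian = np.array([[1.0 - v**2, -1.0],
                         [eps, -eps * b]])
    return np.linalg.eigvals(jacobian)


if __name__ == "__main__":
    for i_ext in (0.0, 0.5):
        lam = fhn_eigenvalues(i_ext)
        state = "stable" if lam.real.max() < 0 else "unstable (oscillations expected)"
        print(i_ext, lam, state)
```

Between I = 0 and I = 0.5 a complex-conjugate pair of eigenvalues crosses the imaginary axis, the signature of a Hopf bifurcation in which the resting state loses stability and a limit cycle (repetitive firing) appears.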

Although it is generally agreed that neurons signal information through sequences of action potentials, the neural code by which information is transferred through the cortex remains elusive. In the cortex, the timing of successive action potentials is highly irregular. This lecture explains under which conditions such firing patterns can be modeled by a Poisson process.

Additional Reading: Shadlen MN, Newsome WT. (1994) Noise, neural codes and cortical organization. Curr Opin Neurobiol. 4:569-79.
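A homogeneous Poisson spike train can be generated by drawing exponentially distributed inter-spike intervals. Two standard signatures of Poisson firing are a coefficient of variation (CV) of the intervals close to 1 and a Fano factor of the spike counts close to 1. The sketch below, with an assumed constant rate, checks both.

```python
import numpy as np


def poisson_spike_train(rate_hz, duration_s, rng):
    """Homogeneous Poisson process: inter-spike intervals are i.i.d. exponential
    with mean 1 / rate. Returns sorted spike times in seconds."""
    n_draw = int(rate_hz * duration_s * 1.5) + 100
    times = np.cumsum(rng.exponential(1.0 / rate_hz, size=n_draw))
    while times[-1] < duration_s:  # rarely needed: extend until the window is covered
        more = np.cumsum(rng.exponential(1.0 / rate_hz, size=n_draw)) + times[-1]
        times = np.concatenate([times, more])
    return times[times < duration_s]


def cv_isi(spike_times):
    """Coefficient of variation of the inter-spike intervals (1 for Poisson)."""
    isis = np.diff(spike_times)
    return float(np.std(isis) / np.mean(isis))


def fano_factor(spike_times, duration_s, window_s):
    """Variance / mean of spike counts in non-overlapping windows (1 for Poisson)."""
    n_bins = int(round(duration_s / window_s))
    counts, _ = np.histogram(spike_times, bins=np.linspace(0.0, duration_s, n_bins + 1))
    return float(np.var(counts) / np.mean(counts))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    spikes = poisson_spike_train(20.0, 1000.0, rng)
    print(len(spikes) / 1000.0, cv_isi(spikes), fano_factor(spikes, 1000.0, 0.5))
```

Recorded cortical spike trains can then be compared against these reference values; deviations indicate regularity (CV below 1) or burstiness (CV above 1).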

Part II Learning

Learning is a fundamental property of the brain (see Markram, Gerstner and Sjöström, 2011).

This lecture introduces the most important concepts of synaptic plasticity.

Early models of learning were formulated as rate-based learning rules. This lecture describes the most important ones, such as Hebbian, Oja and BCM learning.
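The difference between plain Hebbian learning and Oja's rule can be seen with a single linear neuron y = w . x trained on zero-mean inputs. Plain Hebb (dw = eta y x) lets the weight norm grow without bound, while Oja's normalizing term (dw = eta y (x - y w)) makes w converge to the unit-length first principal component of the input covariance. The input statistics and learning rates below are illustrative assumptions.

```python
import numpy as np


def train_hebbian(x, eta, rule="oja", w0=None):
    """Train one linear neuron y = w . x on the rows of x (zero-mean inputs).

    rule="hebb": dw = eta * y * x            (weight norm grows without bound)
    rule="oja":  dw = eta * y * (x - y * w)  (converges to the first principal component)
    """
    w = np.full(x.shape[1], 0.1) if w0 is None else np.array(w0, dtype=float)
    for xi in x:
        y = w @ xi
        if rule == "hebb":
            w += eta * y * xi
        elif rule == "oja":
            w += eta * y * (xi - y * w)
        else:
            raise ValueError(f"unknown rule: {rule}")
    return w


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    cov = np.array([[3.0, 1.0], [1.0, 1.0]])
    x = rng.multivariate_normal([0.0, 0.0], cov, size=20000)
    w = train_hebbian(x, eta=0.002, rule="oja")
    pc1 = np.linalg.eigh(cov)[1][:, -1]  # eigenvector of the largest eigenvalue
    print(w, pc1, np.linalg.norm(w))
```

The sign of the learned vector is arbitrary, so it is compared with the principal eigenvector up to sign.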

Hebbian plasticity is probably insufficient to explain activity-dependent development in neural networks, because it tends to destabilize the activity of neural circuits. We here discuss how circuits can maintain stable activity states by mechanisms of homeostatic plasticity.
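One homeostatic mechanism is multiplicative synaptic scaling: all incoming weights of a neuron are multiplied by a common factor that pushes its firing rate towards a target value. The sketch below applies this to a linear rate neuron r = w . x; it illustrates the principle only and is not a specific model from the lecture (target rate, learning rate and inputs are assumptions).

```python
import numpy as np


def synaptic_scaling(w, x, r_target=5.0, eta=0.01, n_steps=2000):
    """Multiplicative synaptic scaling for a linear rate neuron r = w . x.

    Every step multiplies all weights by the same factor (1 + eta * (r_target - r)),
    so the output rate approaches r_target while the relative weight pattern,
    e.g. one learned by Hebbian plasticity, is preserved.
    """
    w = np.asarray(w, dtype=float).copy()
    x = np.asarray(x, dtype=float)
    for _ in range(n_steps):
        r = w @ x
        w *= 1.0 + eta * (r_target - r)
    return w, float(w @ x)


if __name__ == "__main__":
    w, r = synaptic_scaling([0.1, 0.2, 0.3], [1.0, 2.0, 3.0])
    print(w, r)  # rate close to 5, weight ratios still 1 : 2 : 3
```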

Hebbian learning in rate-coded networks does not capture effects of neural plasticity that depend on the exact timing of pre- and postsynaptic action potentials. We introduce spike-timing-dependent plasticity (STDP) rules and discuss how well they account for recent data. In particular, it has been observed that simple STDP rules do not account well for learning in the presence of multiple pre- and postsynaptic action potentials, nor for firing-rate-dependent effects of plasticity. Finally, the lecture provides an overview of a novel learning rule that accounts for much of the data while keeping the rule simple (for details see Clopath, Büsing, Vasilaki and Gerstner, 2010; Clopath and Gerstner, 2010; Larisch, 2019).
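For comparison with the voltage-based rule, here is a sketch of the classic pair-based STDP window (not the Clopath rule): a presynaptic spike shortly before a postsynaptic one potentiates the synapse, the reverse order depresses it, and the effect decays exponentially with the time difference. Amplitudes and time constants are illustrative assumptions.

```python
import math


def stdp_weight_change(delta_t, a_plus=0.01, a_minus=0.0105,
                       tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP window; delta_t = t_post - t_pre in ms."""
    if delta_t > 0:    # pre before post: potentiation
        return a_plus * math.exp(-delta_t / tau_plus)
    if delta_t < 0:    # post before pre: depression
        return -a_minus * math.exp(delta_t / tau_minus)
    return 0.0


def all_to_all_stdp(pre_times, post_times, **kwargs):
    """Total weight change when every pre/post spike pair contributes independently."""
    return sum(stdp_weight_change(t_post - t_pre, **kwargs)
               for t_pre in pre_times for t_post in post_times)


if __name__ == "__main__":
    print(stdp_weight_change(10.0), stdp_weight_change(-10.0))
    # Pairing at +10 ms repeated every 50 ms: pairs from neighbouring repetitions also count.
    pre = [50.0 * k for k in range(5)]
    post = [t + 10.0 for t in pre]
    print(all_to_all_stdp(pre, post))
```

Because all pairs simply add up linearly, such a rule has no built-in dependence on firing rate or on interactions between spike triplets, which is one way to see the limitations described above.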

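Node perturbation is an alternative to gradient descent for optimizing objective functions: instead of computing the gradient analytically, it adds random perturbations to the activity of individual units and correlates the resulting change in performance with these perturbations.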

Additional Reading: Miconi, T. (2017) Biologically plausible learning in recurrent neural networks reproduces neural dynamics observed during cognitive tasks. eLife 6. doi:10.7554/elife.20899

Gradient-based learning, Node-perturbation rule, Node-perturbation by Exploratory Hebb, Example: Delayed nonmatch-to-sample
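A minimal node-perturbation sketch on a toy regression task: a linear layer y = W x should match a hypothetical teacher W_true. Each output unit receives Gaussian noise, and the change in loss caused by that noise, multiplied by the noise itself, gives a gradient estimate without backpropagating through the network. This is a generic textbook form, not the Exploratory-Hebb variant discussed in the lecture; all sizes and rates are assumptions.

```python
import numpy as np


def node_perturbation_regression(n_steps=5000, eta=0.005, sigma=0.01, seed=0):
    """Learn W with node perturbation; returns (initial error, final error) of ||W - W_true||."""
    rng = np.random.default_rng(seed)
    n_in, n_out = 3, 2
    w_true = rng.normal(size=(n_out, n_in))   # hypothetical teacher weights
    w = np.zeros((n_out, n_in))
    err0 = float(np.linalg.norm(w - w_true))
    for _ in range(n_steps):
        x = rng.normal(size=n_in)
        target = w_true @ x
        y = w @ x
        xi = rng.normal(scale=sigma, size=n_out)            # perturb every output node
        loss_clean = np.sum((y - target) ** 2)
        loss_pert = np.sum((y + xi - target) ** 2)
        # For this quadratic loss, (loss_pert - loss_clean) * xi / sigma**2 is an
        # unbiased estimate of dLoss/dy = 2 * (y - target).
        grad_y = (loss_pert - loss_clean) * xi / sigma**2
        w -= eta * np.outer(grad_y, x)                      # dLoss/dW = grad_y x^T
    return err0, float(np.linalg.norm(w - w_true))


if __name__ == "__main__":
    print(node_perturbation_regression())
```

The estimate is noisy, so node perturbation typically needs more updates than exact gradient descent, but it only requires a scalar performance signal, which makes it attractive as a biologically plausible learning mechanism.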

Place fields, Anticipatory Sequences (pre-play), Theta frequency in the hippocampus, Theta phase precession, Learning lateral connections in CA3, Replay

Part III Networks

Important properties of the brain emerge from the interactions between neurons. This lecture provides an overview of properties that appear in local, lateral and feedback circuits, in particular Dynamic Neural Fields and networks with balanced excitation and inhibition.

Additional Reading: Grossberg, S (1988) Nonlinear Neural Networks: Principles, Mechanisms, and Architectures. Neural Networks, 1:17-61. (only part 1-15)
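A Dynamic Neural Field can be sketched as an Amari-type equation tau du/dt = -u + h + sum_j w(i - j) f(u_j) + s_i on a ring of neurons, with a Heaviside output f, Gaussian lateral excitation and uniform global inhibition. With the hand-picked parameters below (all assumptions), a transient localized input creates a bump of activity that persists after the input is switched off, a simple working-memory-like behaviour.

```python
import numpy as np


def ring_kernel(n=100, a_exc=1.0, sigma=3.0, g_inh=0.2):
    """Lateral weights: Gaussian excitation minus uniform global inhibition on a ring."""
    idx = np.arange(n)
    d = np.abs(idx[:, None] - idx[None, :])
    d = np.minimum(d, n - d)  # periodic (ring) distance
    return a_exc * np.exp(-d**2 / (2.0 * sigma**2)) - g_inh


def simulate_field(stim_amp=3.0, center=50, n=100, h=-2.5, dt_over_tau=0.1,
                   steps_on=500, steps_off=1000):
    """Euler integration of tau du/dt = -u + h + W f(u) + s(t) with Heaviside f.

    A Gaussian input around `center` is applied for steps_on steps, then removed.
    Returns the field u at the end of the simulation.
    """
    w = ring_kernel(n)
    x = np.arange(n)
    stim = stim_amp * np.exp(-(x - center) ** 2 / (2.0 * 2.0**2))
    u = np.full(n, h, dtype=float)           # start at the resting level h
    for step in range(steps_on + steps_off):
        s = stim if step < steps_on else 0.0
        f = (u > 0.0).astype(float)           # Heaviside output of each neuron
        u += dt_over_tau * (-u + h + w @ f + s)
    return u


if __name__ == "__main__":
    u = simulate_field()
    print(np.flatnonzero(u > 0.0))  # neurons still active after the input was removed
```

Local excitation sustains the active region, while global inhibition limits its width and keeps the rest of the field silent; without any input the field simply stays at its resting level.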

Space can be represented in different coordinate frames, such as eye-centered for early visual processing and head-centered for acoustic stimuli. It appears that the brain relies on different coordinate systems to represent the space in our external environment. Thus, this lecture explains how stimuli can be transformed from one coordinate system to another.

Suggested Reading:

Cohen & Andersen (2002). Nature Rev Neurosci 3:553-562.

Pouget, A., and Snyder, L. (2000) Computational approaches to sensorimotor transformations. Nature Neuroscience. 3:1192-1198.

Deneve S, Latham PE, Pouget A. (2001) Efficient computation and cue integration with noisy population codes. Nat Neurosci., 4:826-31.

Pouget A, Deneve S, Duhamel JR. (2002) A computational perspective on the neural basis of multisensory spatial representations. Nat Rev Neurosci., 3:741-7.

Salinas E, Abbott LF (1996) A model of multiplicative neural responses in parietal cortex. Proc Natl Acad Sci U S A, 93:11956-61.
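Loosely in the spirit of the basis-function view discussed in the suggested reading, a coordinate transformation can be sketched as a linear readout from units that combine retinal position and eye position. Here each unit multiplies a Gaussian tuning to retinal position by a Gaussian tuning to eye position, and a ridge-regularized linear readout learns the head-centered position (retinal + eye). Tuning widths, preferred positions and the degree range are hypothetical choices for illustration.

```python
import numpy as np

CENTERS = np.arange(-30.0, 31.0, 5.0)  # preferred positions in degrees (hypothetical grid)
SIGMA = 5.0                             # tuning width in degrees (hypothetical)


def basis_activity(retinal, eye):
    """Responses of product units: Gaussian tuning to retinal position times
    Gaussian tuning to eye position. Returns an array of shape (n_samples, k * k)."""
    g_r = np.exp(-(retinal[:, None] - CENTERS[None, :]) ** 2 / (2.0 * SIGMA**2))
    g_e = np.exp(-(eye[:, None] - CENTERS[None, :]) ** 2 / (2.0 * SIGMA**2))
    return (g_r[:, :, None] * g_e[:, None, :]).reshape(len(retinal), -1)


def fit_head_centered_readout(n_train=2000, ridge=1e-3, seed=0):
    """Fit linear readout weights mapping basis activity to head-centered position."""
    rng = np.random.default_rng(seed)
    retinal = rng.uniform(-20.0, 20.0, n_train)
    eye = rng.uniform(-20.0, 20.0, n_train)
    a = basis_activity(retinal, eye)
    target = retinal + eye                    # head-centered = retinal + eye position
    lhs = a.T @ a + ridge * np.eye(a.shape[1])
    return np.linalg.solve(lhs, a.T @ target)


if __name__ == "__main__":
    weights = fit_head_centered_readout()
    test_r, test_e = np.array([10.0, -5.0]), np.array([-15.0, 12.0])
    print(basis_activity(test_r, test_e) @ weights)  # close to [-5, 7]
```

The same basis layer could feed other linear readouts, for example retinal position alone, which is the main appeal of basis-function representations for sensorimotor transformations.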

Part IV Mechanisms

Suggested Reading:

Rovamo J, Virsu V (1983) Isotropy of cortical magnification and topography of striate cortex. Vision Res 24: 283-286.

Part V Neural Simulators

This lecture provides an overview of neural simulators.

Exercises

Exercise I.0: MATLAB introduction

Files: In-depth script

Exercise I.1: Euler's method

Links: Numerical solution of a simple ODE and the interaction of h and tau

Exercise I.2: Integrate-and-fire model

Links: Stepwise solution of the integrate-and-fire neuron; Files: exerciseI.2.zip; Sample solution: Part A, Part B, Notes on the Solution

Exercise I.3: Hodgkin and Huxley model

Files: exerciseI.3.zip, Solution

Exercise I.4: Synapses

Files: exerciseI.4.zip, Solution

Exercise I.5: Hybrid Spiking Models

Files: initial file, spiking patterns, Solution

Exercise II.1: Hebbian Learning

Files: exerciseII.1.zip, Solution (MATLAB)

Exercise II.2: Anti-Hebbian learning

Files: exerciseII.2.zip, Solution (MATLAB)

Exercise II.3: Voltage-based STDP

Files: exerciseII.3.zip, Solution

Exercise III.1: Radial Basis Function Networks

Files: exerciseIII.1.zip, Solution

-

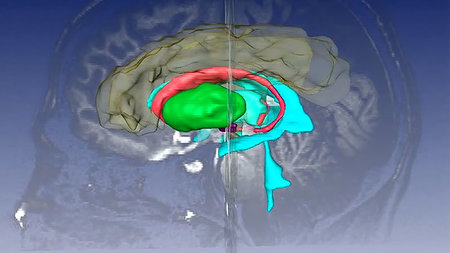

Welche Schaltkreise im Gehirn bestimmen unser Alltagsverhalten?

Vom dualen System zum Netzwerk: Forschungsteam aus Chemnitz, Santiago de Chile und Magdeburg hat eine neue Sicht auf die Handlungssteuerung im Gehirn und ihren Nutzen für die Entwicklung neuroinspirierter KI …

-

Gehirn-Schluckauf besser verstehen

Projektstart für deutsch-israelisch-amerikanisches Kooperationsprojekt zur Erforschung von Tourette- Ursachen …

-

Mit SmartStart 2 auf dem Weg zur Promotion

Oliver Maith, der in Chemnitz Sensorik und kognitive Psychologie studierte, war in einem wettbewerblichen Verfahren zur Promotionsförderung erfolgreich und forscht nun im Bereich der Computational Neuroscience …

-

Artikel enthält Video

Artikel enthält Video

RoboDay 2026: Junge Robotik-Talente zeigen ihr Können

Regionaler Vorausscheid zur World Robot Olympiad vereint am 30. Mai 2026 mit einem Begleitprogramm Kinder und Jugendliche, die spielerisch den Umgang mit Robotertechnologie, KI und autonomem Fahren erleben und die TU Chemnitz kennenlernen können …

-

Human- und Sozialwissenschaften Buchvorstellung: Umkämpfte Deutungshoheit. Wie rechte Narrative die Demokratie …

Vorstellung des gleichnamigen Buchs von Susanne Rippl und Christian Seipel …

-

Philosophische Fakultät Affektive Polarisierung: Ursprünge, Effekte und Verbindungen zum Populismus

Einführung und Relevanz des Themas, Definition und Ursprünge der …

-

Naturwissenschaften 55. Chemiewettbewerb "Julius Adolph Stöckhardt" an der TU Chemnitz

Das Thema des Chemiewettbewerbes lautet: "Aluminium, Kupfer und Wolfram …

-

TU Chemnitz TUCsommernacht

Tanz und gute Laune bis Mitternacht: Verschiedene Musik- und kulinarische Angebote sorgen im …

-

TU Chemnitz Selbstfahrende Autos: Technik und Zukunft hautnah erleben

Kannst du dir vorstellen, dass Autos in Zukunft ganz allein fahren? In …

-

TU Chemnitz IMMATRIKULATIONS- UND AUFTAKTFEIER 2026/2027

Traditionsgemäß werden jedes Jahr zum Beginn des Wintersemesters die neuen …