Simulation of neuronal agents in virtual reality

The goal of this research project is to simulate integrative cognitive models of the human brain, as developed in other projects, in order to investigate the performance of cognitive agents interacting with their environment in virtual reality. Each agent has a human-like appearance, properties and behavior. The project thus transfers brain-like algorithms to technical systems.

System

The neuronal agents and their virtual reality (VR) environment are simulated on a distributed, specialized device. The agents have all the main abilities of a human: they are capable of executing simple actions such as moving or jumping, moving their eyes and heads, and showing emotional facial expressions. Agents learn their behavior autonomously, based on their actions and the sensory consequences of those actions in the environment. For this purpose, the VR engine contains a rudimentary action and physics engine. Small movements (like stretching the arm) are animated by the VR engine, while the neuronal model controls high-level action choices such as grasping a certain object.
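The division of labor described above can be illustrated with a minimal Python sketch. It is not the project's implementation: `ToyEnvironment`, `choose_action` and `run_episode` are hypothetical names, the one-dimensional world is invented for illustration, and a fixed rule stands in for the learned neuronal model. The sketch only shows the interface idea: the model picks a high-level action, and the engine expands it into small animated movements and returns the sensory consequences.

```python
from dataclasses import dataclass, field


@dataclass
class ToyEnvironment:
    """Hypothetical stand-in for the VR engine (1-D world, illustration only)."""
    agent_pos: int = 0
    object_pos: int = 3
    holding: bool = False
    log: list = field(default_factory=list)

    def sense(self):
        # Sensory consequences reported back to the agent.
        return {"distance": self.object_pos - self.agent_pos,
                "holding": self.holding}

    def execute(self, action):
        # The engine, not the model, animates the small movements.
        if action == "walk_to_object":
            while self.agent_pos != self.object_pos:
                self.agent_pos += 1 if self.object_pos > self.agent_pos else -1
                self.log.append(f"step to {self.agent_pos}")
        elif action == "grasp_object" and self.agent_pos == self.object_pos:
            self.log.append("stretch arm")
            self.log.append("close hand")
            self.holding = True
        return self.sense()


def choose_action(obs):
    """Hypothetical stand-in for the neuronal model: high-level choices only.

    A fixed rule is used here; in the project this choice is learned.
    """
    if obs["holding"]:
        return None  # goal reached, nothing left to do
    if obs["distance"] != 0:
        return "walk_to_object"
    return "grasp_object"


def run_episode(env, max_steps=10):
    """Alternate between sensing, choosing and executing until done."""
    obs = env.sense()
    chosen = []
    for _ in range(max_steps):
        action = choose_action(obs)
        if action is None:
            break
        chosen.append(action)
        obs = env.execute(action)
    return chosen


if __name__ == "__main__":
    env = ToyEnvironment()
    print(run_episode(env))  # high-level actions chosen by the "model"
    print(env.log)           # low-level movements animated by the "engine"
```

Keeping the low-level animation inside the environment means the decision function only ever sees observations and action names, which is the separation the paragraph describes.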

To investigate the interactions of the neuronal agents with human users, the world will include user-controlled avatars. Users will receive sensory information through appropriate VR interfaces; for example, visual information will be provided by a projection system. Users will also be able to interact with the environment; the necessary movement information will be gathered by tracking their face and limbs. Face tracking is used in particular to detect the user's emotions, in order to investigate emotional communication between humans and neuronal agents.

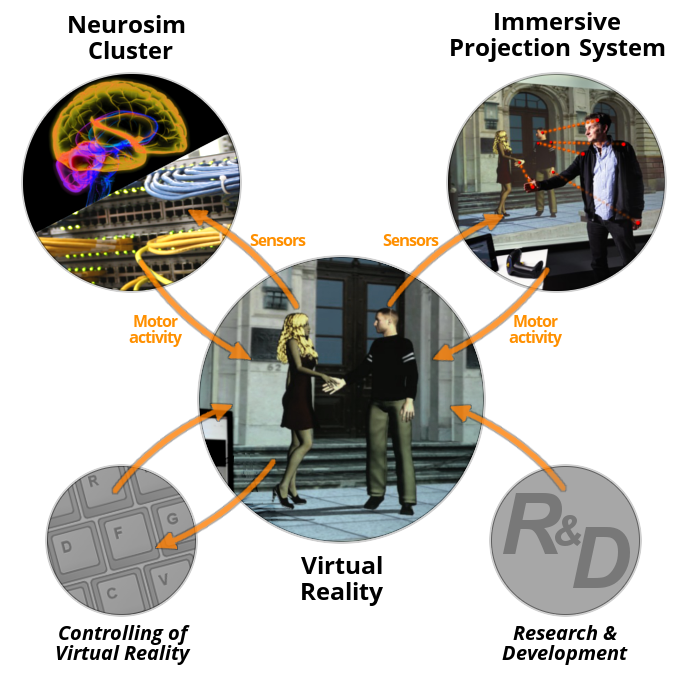

Technically, the device consists of several parts: a virtual reality engine, a neurosim cluster simulating the agents' brains, and an immersive projection system to map the human users to avatars. The cluster itself will be able to simulate several neuronal models in parallel, which allows us to use multi-agent setups. The cluster will consist of NVIDIA CUDA acceleration cards (hardware layer) and the neuronal simulator framework ANNarchy (software layer).

Example

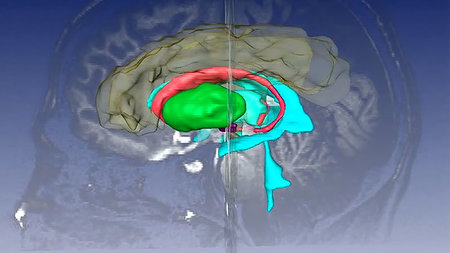

"Vision and Memory" setup originally developed in the EU SpaceCog project (FP7, Future Emerging Technologies: Neuro-bio-inspired systems) and further improved within the initiative Productive Teaming The task of the v(irtual) Felice is to walk from a position at the entry of the room to a predefined object, localise and memorise it. She then walks to a different position in the room. From there, she recalls where the object is located, walks back to it and performs an object localization, guided by spatial attention. Large parts in this simulations are based on a brain inspired neural network including the hippocampus, the ventral and dorsal visual streams for object perception and the frontal eye fields for gaze shifting.

Associated projects

Competence Center (Kompetenzzentrum) "Virtual Humans"

The digital modeling of human properties and behavior is challenging, but it opens up many industrial applications, such as product and process ergonomics, medicine, sport simulations and entertainment. The acceptance of such a virtual human increases significantly with the number of modeled features; the most important properties are the body, its movements, and a control system that considers the current task and the emotional state of the virtual human. The competence center aims to improve the usability of such models and to transfer the research results to companies in the nearby region.

Research Training Group (Graduiertenkolleg) "CrossWorlds - Connecting Virtual and Real Social Worlds"

The Research Training Group "Connecting Virtual and Real Social Worlds" aims to overcome the current constraints of media-mediated communication, which is always restricted in comparison to communication in the real world. To this end, the group will study new ways of interaction and communication that connect the virtual and real social worlds. The research program subdivides the field into communication, emotions, sensomotorics, and e-learning.

As part of this initiative, we develop brain-inspired approaches that allow for "teaming" between people and production systems, e.g. in the form of cognitive agents.

Selected Publications

Burkhardt, M., Bergelt, J., Goenner, L., Dinkelbach, H.Ü., Beuth, F., Schwarz, A., Bicanski, A., Burgess, N., Hamker, F.H. (2023)

A Large-scale Neurocomputational Model of Spatial Cognition Integrating Memory with Vision

Neural Networks, doi: 10.1016/j.neunet.2023.08.034